Are you still building content around keywords? If so, you’re working with an SEO model that no longer reflects how search actually functions.

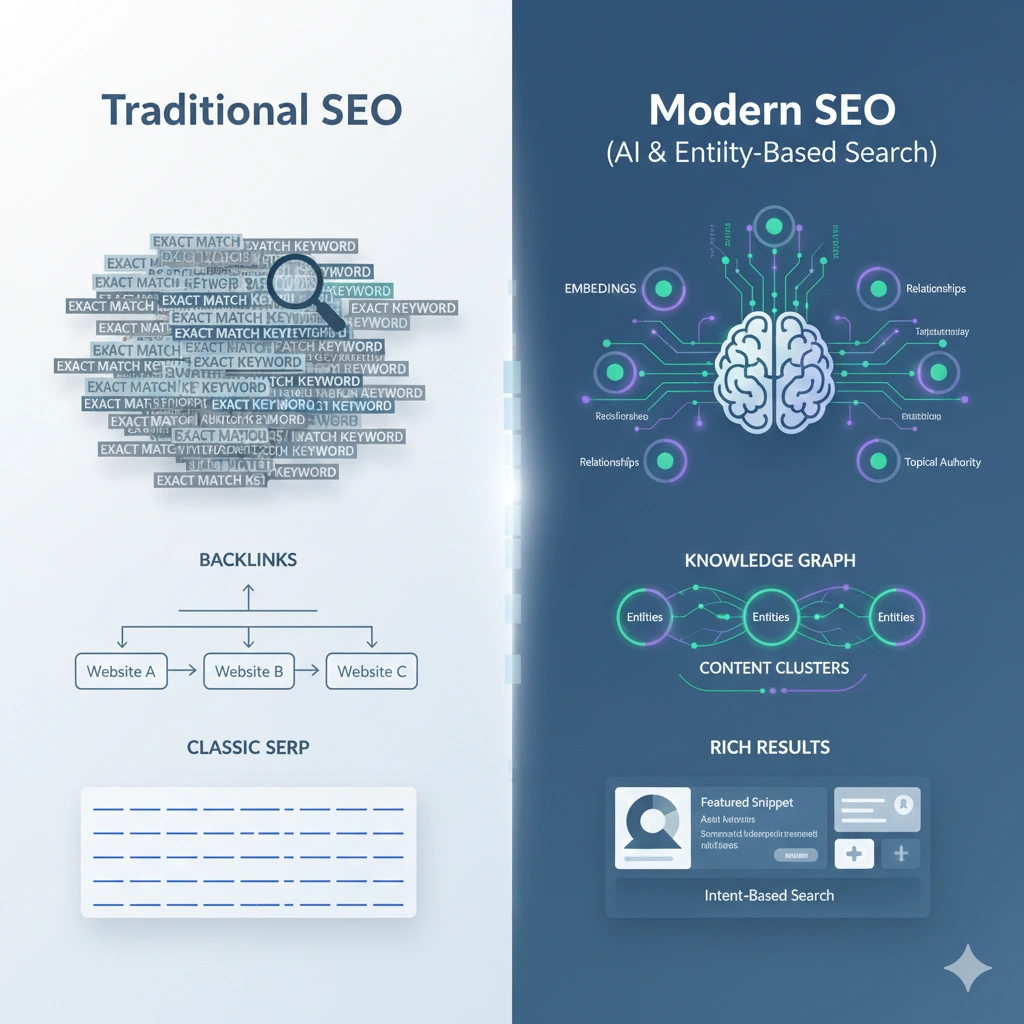

Traditional SEO relied on keyword targeting, page optimization, and backlinks. That approach worked when search engines ranked pages largely by matching words. Today, search systems operate very differently. Google Search (including its AI Overviews) and generative engines such as ChatGPT and Perplexity don’t rank content on keyword usage alone; they evaluate meaning, context, and authority.

SEO is expanding into Generative Engine Optimization (GEO). Instead of rewarding pages that repeat keywords, modern search systems surface content that demonstrates topic understanding, entity coverage, and trust. Old techniques don’t fail because they’re wrong; they fail because they’re incomplete.

In this post, I explain how SEO works beyond keywords, how generative engines evaluate content, and what actually drives visibility in modern search environments.

Why Keyword-First SEO Is No Longer Enough

Many websites continue to publish more content, target more keywords, and expand their blogs—yet rankings remain flat. The issue isn’t effort or consistency. It’s that keyword-focused SEO no longer reflects how search systems evaluate content.

Search engines don’t struggle to find pages that mention a topic. They struggle to determine which sources genuinely understand it. When content is built around isolated keywords, it often answers only the surface-level version of a query. As a result, it fails to perform for complex searches, follow-up questions, and conversational queries.

This gap becomes even more visible in AI-driven results. Google AI Overviews, ChatGPT, and other LLM-based systems don’t select sources on keyword usage alone. They rely on context, entity relationships, and how thoroughly a topic is explained. Pages that lack depth may still be indexed, but they’re rarely cited or surfaced.

The missing factor is semantic depth—the ability of content to demonstrate real topic understanding. Without it, increasing content volume or refining keyword targeting delivers diminishing returns.

The Keyword Concept: How SEO Originally Worked

Early SEO was built on a simple assumption: if a page contained the same words a user searched for, it deserved to rank. Search engines relied heavily on keyword matching to determine relevance, with little ability to interpret meaning or context.

To strengthen those signals, SEO practices focused on measurable factors such as keyword density and placement. Terms were inserted into titles, headings, URLs, and body copy to increase visibility. Backlinks acted as a secondary layer of validation, reinforcing relevance through external references rather than content understanding.

This model worked because search engines were limited. They could identify patterns, count occurrences, and measure link popularity, but they couldn’t evaluate whether a page actually understood a topic. As a result, surface-level optimization often outperformed more thoughtful explanations.
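As a rough illustration of the counting those early systems leaned on, here is a minimal Python sketch of keyword density. It assumes one common convention (phrase occurrences times words in the phrase, divided by total words); no search engine has published this as its formula, and the function name `keyword_density` and the sample text are hypothetical.

```python
import re


def keyword_density(text: str, phrase: str) -> float:
    """Share of words in `text` taken up by `phrase` (0.0 to 1.0).

    Assumed convention: (occurrences * words in phrase) / total words.
    """
    words = re.findall(r"[a-z0-9']+", text.lower())
    target = phrase.lower().split()
    if not words or not target:
        return 0.0
    n = len(target)
    # Slide a window of n words across the text and count exact matches.
    hits = sum(1 for i in range(len(words) - n + 1) if words[i:i + n] == target)
    return hits * n / len(words)


# Hypothetical keyword-stuffed copy, for illustration only.
page = (
    "Best doctor in India. Looking for the best doctor in India? "
    "Our best doctor in India team is here."
)
print(f"{keyword_density(page, 'best doctor in India'):.2f}")
```

The repetitive sample scores about 0.63, meaning the phrase fills nearly two-thirds of the text, yet the metric says nothing about whether the page explains anything. That blind spot is the limitation described above.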

The limitation of this approach became clear as search behavior evolved. Keyword-optimized pages answered basic queries but struggled with nuance, comparisons, and follow-up questions. As search systems grew more sophisticated, relevance could no longer be determined by word matching alone.

Traditional SEO vs Modern SEO

Search engines didn’t abandon traditional SEO overnight. The shift happened gradually as systems became better at understanding language, context, and intent. The difference between traditional and modern SEO lies in how relevance is evaluated.

Traditional SEO Model

Traditional SEO focused on optimizing individual pages to match specific keywords. Relevance was established primarily through keyword usage and reinforced by backlinks pointing to the page.

Optimization efforts centered on:

- Keywords and anchor text

- Page-level signals such as titles, headings, and meta tags

- Link acquisition as a proxy for authority

Example:

A medical website might create a page optimized for the keyword “best doctor in India”. The page repeats the term in headings, meta tags, and content, and builds backlinks using the same anchor text. Ranking depended more on keyword alignment and links than on medical credibility or topic coverage.

Content was usually treated as a standalone asset, often ignoring deeper medical context or related patient concerns.

Modern SEO Model

Modern SEO evaluates whether content satisfies search intent and demonstrates genuine topic understanding. Instead of ranking pages based on keyword repetition, search engines analyze how well content explains concepts, relationships, and real-world expertise.

Key characteristics of modern SEO include:

- Intent-based retrieval instead of exact keyword matching

- Entity recognition to identify people, conditions, treatments, and locations

- AI-assisted evaluation using language models and contextual signals

Example:

The same medical website now publishes content around the entity “Doctor”, connected to related entities such as:

- Medical specialization (cardiologist, dermatologist)

- Conditions treated

- Symptoms

- Treatments and procedures

- Hospital affiliation

- Location and credentials

Even if the phrase “best doctor in India” appears rarely, or not at all, the content can still surface if it clearly explains expertise, experience, and patient relevance.

From Keywords to Meaning: How Search Has Evolved

Search didn’t move beyond keywords because they stopped working. It moved on because keywords alone couldn’t explain meaning. As search systems matured, the focus shifted from matching words to understanding what those words represent.

This evolution can be seen as a progression:

Keywords → Intent → Entities → Semantic Depth

In early search, keywords were treated as exact signals. If a page contained the same words as a query, it was considered relevant. Over time, search engines began asking a different question: What is the user actually trying to accomplish?

That shift introduced intent. Queries were no longer isolated strings of text but expressions of needs—informational, navigational, or transactional.

Intent alone still had limits. To interpret intent accurately, search engines needed to understand things, not just phrases. This led to entity-based understanding, where people, places, professions, conditions, and concepts are identified and connected.
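A toy comparison makes the shift from strings to things concrete. The sketch below assumes a small, hand-written alias table (`ENTITY_ALIASES`, hypothetical) that maps different phrasings to the same entity; real search systems learn such associations at a far larger scale and in ways that aren’t public, so treat this purely as an illustration of the idea.

```python
# Hypothetical alias table: each surface phrase maps to one entity ID.
ENTITY_ALIASES = {
    "cardiologist": "entity:cardiologist",
    "heart specialist": "entity:cardiologist",
    "heart doctor": "entity:cardiologist",
    "chest pain": "entity:chest_pain",
}


def exact_match(query: str, page: str) -> bool:
    """Keyword-era relevance: the whole query string must appear in the page."""
    return query.lower() in page.lower()


def entities_in(text: str) -> set[str]:
    """Return the entity IDs whose aliases occur in the text."""
    lowered = text.lower()
    return {eid for alias, eid in ENTITY_ALIASES.items() if alias in lowered}


def entity_match(query: str, page: str) -> bool:
    """Entity-era relevance: query and page share at least one entity."""
    return bool(entities_in(query) & entities_in(page))


page = "Our cardiologist evaluates chest pain and irregular heartbeat."
query = "heart specialist for chest pain"
print(exact_match(query, page))   # False
print(entity_match(query, page))  # True
```

The exact-match check fails because the page never uses the query’s wording, while the entity check succeeds because both texts refer to the same underlying concepts.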

Semantic depth is the final layer. It measures how well content explains a topic, connects related ideas, and covers the subject beyond a single query.

Timeline of Google’s Evolution

Google’s major updates reflect this shift away from keyword dependence:

- Hummingbird (2013) focused on understanding entire queries instead of individual words, allowing Google to process natural language more effectively.

- RankBrain (2015) introduced machine learning to interpret unfamiliar or ambiguous searches by mapping them to known concepts.

- BERT (2019) improved contextual understanding, especially how words relate to each other within a sentence.

- MUM (2021) expanded this capability further by connecting information across formats and languages.

Each update reduced the importance of exact keyword matching and increased the value of context and comprehension.

Helpful Content & AI Systems

Recent systems prioritize whether content is genuinely useful, not just optimized. Pages are evaluated on clarity, completeness, and alignment with user expectations.

This is where “SEO beyond keywords” becomes practical. Content that explains a topic thoroughly, uses consistent terminology, and reflects real expertise is more likely to surface—whether in traditional search results or AI-generated responses.

Search today rewards understanding, not repetition.

What Is Semantic Depth in SEO?

Semantic depth describes how well a piece of content explains a topic, not how many words it contains. Length alone doesn’t create relevance. Understanding does.

Content with real semantic depth does a few specific things:

- Defines core entities clearly: it identifies what the topic is actually about (people, concepts, processes, or objects) and removes ambiguity.

- Explains the attributes of those entities: it describes characteristics such as function, role, scope, limitations, and context.

- Shows relationships between related concepts: it connects entities logically instead of presenting them in isolation.

- Covers the topic from multiple angles: it addresses how, why, when, and where the topic matters, not just what it is.

Example: Doctor Content With vs Without Semantic Depth

A shallow page might target the keyword “best doctor in India” and include:

- Repeated mentions of the phrase

- A short bio

- A list of services

- Contact details

Even if the page is long, it doesn’t explain much.

A page with semantic depth treats Doctor as an entity and expands around it by:

- Defining the doctor’s specialization and scope of practice

- Explaining conditions treated and related symptoms

- Describing treatments and procedures and when they are used

- Connecting the doctor to hospital affiliation, credentials, and experience

- Addressing patient intent, such as diagnosis, recovery, and risks

The keyword may appear only once—or not at all—but the topic is fully explained.
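One way to apply this comparison to your own drafts is a simple coverage checklist. The Python sketch below is a hypothetical editorial audit, not how Google or any AI system scores pages: the aspect names and trigger terms in `DOCTOR_PAGE_ASPECTS` are assumptions drawn from the list above, and substring matching is deliberately crude.

```python
# Hypothetical editorial checklist: each aspect lists terms that suggest coverage.
DOCTOR_PAGE_ASPECTS = {
    "specialization": ["specializ", "scope of practice"],
    "conditions": ["condition", "symptom"],
    "treatments": ["treatment", "procedure"],
    "credentials": ["hospital", "certified", "experience"],
    "patient_intent": ["diagnosis", "recovery", "risk"],
}


def coverage_report(page_text: str) -> dict[str, bool]:
    """Mark each aspect as covered if any of its trigger terms appears."""
    text = page_text.lower()
    return {
        aspect: any(term in text for term in terms)
        for aspect, terms in DOCTOR_PAGE_ASPECTS.items()
    }


# Hypothetical page copy, for illustration only.
shallow = "Best doctor in India. Best doctor in India. Call us today."
deep = (
    "This cardiologist specializes in heart rhythm disorders and practices "
    "at a teaching hospital. The page explains symptoms such as palpitations, "
    "when each procedure is used, and what recovery and risks to expect."
)

for name, text in [("shallow", shallow), ("deep", deep)]:
    report = coverage_report(text)
    print(name, sum(report.values()), "of", len(report), "aspects covered")
```

The shallow page covers none of the aspects despite repeating its keyword; the deeper page covers all five. A real audit would still need a human editor to judge whether each aspect is explained well, not merely mentioned.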

From a search and AI perspective, the second page demonstrates subject understanding. The first only signals optimization.

That difference is semantic depth.

Semantic Depth vs Topical Authority

Semantic depth and topical authority are often used interchangeably, but they solve different problems in search. One focuses on how deeply a topic is explained, the other on how broadly a subject is covered.

What Is Topical Authority?

Topical authority refers to the overall coverage of a subject area across multiple pieces of content. It’s built when a site consistently publishes content that addresses the full range of questions, subtopics, and use cases related to a theme.

Topical authority is established through:

- Breadth across a subject, not a single page

- Consistent terminology and concepts across pages

- Internal connections between related topics

Doctor example:

A healthcare website aims to build authority around cardiology. It publishes separate pages on:

- Chest pain and related symptoms

- Diagnostic tests such as ECG and angiography

- Treatment options, including medication and surgery

- Lifestyle changes and prevention

- Post-treatment care and recovery
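The internal connections that tie such a cluster together can be checked mechanically. The sketch below assumes a hub-and-spoke structure, one common way to organize a topic cluster, with a hypothetical `/cardiology/` hub page and hypothetical URLs for the pages listed above; it reports spokes the hub doesn’t link to and spokes that don’t link back.

```python
# Hypothetical cardiology topic cluster: page path -> paths it links to.
LINKS = {
    "/cardiology/": [
        "/cardiology/chest-pain/",
        "/cardiology/ecg-angiography/",
        "/cardiology/treatment-options/",
        "/cardiology/prevention/",
    ],
    "/cardiology/chest-pain/": ["/cardiology/", "/cardiology/ecg-angiography/"],
    "/cardiology/ecg-angiography/": ["/cardiology/", "/cardiology/treatment-options/"],
    "/cardiology/treatment-options/": ["/cardiology/", "/cardiology/recovery/"],
    "/cardiology/prevention/": ["/cardiology/"],
    "/cardiology/recovery/": ["/cardiology/treatment-options/"],
}

HUB = "/cardiology/"


def cluster_gaps(links: dict[str, list[str]], hub: str) -> dict[str, list[str]]:
    """List spokes the hub does not link to, and spokes that do not link back."""
    spokes = [page for page in links if page != hub]
    return {
        "missing_from_hub": [p for p in spokes if p not in links[hub]],
        "not_linking_to_hub": [p for p in spokes if hub not in links[p]],
    }


print(cluster_gaps(LINKS, HUB))
```

Here the recovery page is flagged on both counts: it belongs to the topic but is only weakly tied to the rest of the cluster.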

What Is Semantic Depth?

Semantic depth exists within individual pieces of content. It measures how well a single page explains a topic, clarifies entities, and connects related concepts.

A page with semantic depth:

- Defines what the topic is and what it is not

- Explains attributes, processes, and limitations

- Addresses user intent beyond surface-level answers

Without semantic depth, even a well-linked page remains shallow.

Doctor example:

A page titled “What Does a Cardiologist Do?” demonstrates semantic depth when it:

- Defines the role and scope of a cardiologist

- Explains conditions treated and symptoms evaluated

- Describes diagnostic methods and treatment decisions

- Clarifies when referral or surgery is required

This page doesn’t rely on keyword repetition. It relies on explanation.

How They Work Together

Breadth without depth creates weak authority. Publishing many pages that lightly touch a topic signals coverage but not understanding.

Depth strengthens authority by proving expertise at the page level. When multiple deep pages are connected through a clear topical structure, search systems recognize the site as a reliable source.

In modern search, authority isn’t earned by volume alone. It emerges when deep explanations are organized within a clear topical framework.

Why Semantic Depth Matters for Rankings Today

Semantic depth helps search systems determine whether a page is a complete and reliable answer, not just a relevant match. As ranking systems become more context-driven, shallow content struggles to compete—even if it’s well optimized.

Semantic depth strengthens several of the signals that influence rankings.

Intent Satisfaction

Search engines evaluate whether a page actually resolves the user’s intent. Content that explains concepts clearly, addresses follow-up questions, and removes ambiguity is more likely to satisfy that intent. Pages that only repeat surface-level information often fail to meet this threshold.

Knowledge Graph Alignment

When content clearly defines entities and their attributes, it aligns more easily with how search engines store and retrieve information. This improves the chances of being associated with the correct entities and relationships, strengthening relevance beyond keyword matching.

Visibility for Long-Tail and Complex Queries

Semantically deep content naturally surfaces for varied and complex queries. Because the topic is explained thoroughly, the page can match multiple expressions of the same intent—even when the wording differs significantly from the original query.

Selection for AI-Generated Answers

AI-generated responses depend on context. Language models favor content that explains relationships, clarifies meaning, and provides structured understanding. Shallow pages rarely provide enough context to be selected or summarized accurately.

In modern search environments, rankings are increasingly determined by how well a topic is understood, not how precisely keywords are placed.

Entities and Relationships: The Core of Modern Search

Modern search focuses on entities and their relationships, not just keywords. Understanding these is essential to creating content that ranks in AI-driven search.

What Are Entities?

An entity is a clearly defined concept—such as a person, profession, brand, place, or idea—that can be uniquely understood and referenced. Entities represent meaning, not just words.

Example entities for a doctor page:

- Doctor

- Specialization

- Hospital

- Treatment

Attributes and Properties

Entities have attributes that describe their characteristics, functions, or qualities. These give search engines context and allow content to explain what makes an entity unique.

Example:

- Doctor → Specialization, Experience, Certifications

- Hospital → Location, Departments, Ratings

- Treatment → Type, Duration, Effectiveness
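In code, an entity with attributes maps naturally onto a small record type. This Python sketch is illustrative only: the `Entity` class and every name and value in it are hypothetical placeholders, not real people or institutions, and not a format any search engine requires.

```python
from dataclasses import dataclass, field


@dataclass
class Entity:
    """An entity: a type, a unique name, and the attributes that describe it."""
    entity_type: str
    name: str
    attributes: dict[str, str] = field(default_factory=dict)

    def describe(self) -> str:
        """Render the entity and its attributes as one readable line."""
        details = ", ".join(f"{k}: {v}" for k, v in self.attributes.items())
        return f"{self.name} ({self.entity_type}) - {details}"


# Hypothetical placeholder values, for illustration only.
doctor = Entity("Doctor", "Example Cardiologist", {
    "specialization": "Cardiology",
    "experience": "12 years",
    "certifications": "Board-certified",
})
hospital = Entity("Hospital", "Example Heart Hospital", {
    "location": "Example City",
    "departments": "Cardiology, Cardiac Surgery",
})

print(doctor.describe())
print(hospital.describe())
```

Each attribute answers a question a reader (or a search system) might ask about the entity, which is the same job the attributes perform in well-written content.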

How Relationships Build Meaning

Entities are connected. Understanding these relationships allows search engines to map content in a way that reflects real-world knowledge.

Example:

- Doctor → Works at → Hospital

- Doctor → Treats → Patient Conditions

- Treatment → Part of → Care Plan
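Knowledge graphs commonly store facts as (subject, relation, object) triples. The Python sketch below assumes that representation, with hypothetical entity names and relation labels, and shows how explicit relationships let a system chain facts together; it is a simplified picture, not a description of Google’s internal Knowledge Graph.

```python
# Each fact is a (subject, relation, object) triple; all names are hypothetical.
TRIPLES = [
    ("Dr. Example", "works_at", "Example Heart Hospital"),
    ("Dr. Example", "treats", "Atrial fibrillation"),
    ("Dr. Example", "treats", "Coronary artery disease"),
    ("Coronary artery disease", "treated_by", "Angioplasty"),
    ("Angioplasty", "part_of", "Coronary care plan"),
]


def objects(subject: str, relation: str) -> list[str]:
    """Follow one relation from a subject, e.g. where does this doctor work?"""
    return [o for s, r, o in TRIPLES if s == subject and r == relation]


def two_hop(subject: str, first: str, second: str) -> list[str]:
    """Chain two relations, e.g. doctor -> conditions treated -> treatments."""
    return [o2 for o1 in objects(subject, first) for o2 in objects(o1, second)]


print(objects("Dr. Example", "works_at"))
print(two_hop("Dr. Example", "treats", "treated_by"))
```

The second query (which procedures relate to the conditions this doctor treats) can only be answered because each link is stated explicitly. Content that spells out the same connections in prose gives search systems a comparable chain to follow.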

Knowledge Graph Mapping

When entities, attributes, and relationships are clearly defined, content is easier to associate with the corresponding entities in Google’s Knowledge Graph, which can improve:

- Accuracy in search results

- Chances of appearing in featured snippets

- Likelihood of AI-generated citations

By structuring content this way, you create semantic depth that mirrors real-world understanding, making your content easier for both search engines and AI systems to interpret, retrieve, and trust.

How Search Engines Evaluate Semantic Depth

Modern search engines no longer rely solely on keywords. Instead, they assess whether content demonstrates real understanding of a topic. Google draws on several systems to do this, most of them powered by machine learning:

- Knowledge Graph: Maps entities, their attributes, and relationships to understand context.

- RankBrain: Uses machine learning to interpret what users are searching for, including unfamiliar queries, and match them to relevant results.

- BERT: Understands natural language and subtle nuances in text.

- MUM (Multitask Unified Model): Integrates multi-modal information and complex connections across topics.

Together, these systems help Google judge whether content reflects genuine understanding of a subject. Pages that clearly define entities, explain attributes, and show relationships are more likely to appear in:

- Organic search results: Ranking higher for a variety of related queries

- Featured snippets: Providing concise, authoritative answers

- AI Overviews: Being cited in Google’s AI-generated summaries

- Generative responses: Selected as sources for AI-powered answers

This is the essence of semantic depth, and it is why entity-rich, well-structured pages hold a visibility advantage over shallow, keyword-focused ones.

EEAT as a Trust Multiplier (Not a Ranking Trick)

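EEAT (Experience, Expertise, Authoritativeness, Trustworthiness) is no longer just a buzzword. It is a framework from Google’s Search Quality Rater Guidelines that describes how content signals trust and credibility to both users and AI-powered search systems. Unlike ranking tricks, EEAT is not a single factor you can optimize; it reflects whether your content is genuinely reliable, accurate, and authoritative.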

Experience

Content should reflect real-world experience. Case studies, first-hand examples, or practical insights show that the content comes from someone who has worked with the subject directly.

Example: A doctor writing about cardiology shares patient care insights, treatment considerations, or procedural experiences.

Expertise

Expertise shows that the author has specialized knowledge in the topic. Credentials, certifications, or deep domain understanding signal to readers and AI systems that the content is trustworthy.

Example: An article on intermittent fasting written by a certified nutritionist demonstrates more expertise than one written by a general wellness blogger.

Authoritativeness

Authoritativeness comes from recognition by others in the field. This includes citations, backlinks from reputable sites, media mentions, or references in academic research.

Example: A healthcare article referenced by hospitals or medical associations.

Trustworthiness

Trustworthiness is about accuracy, transparency, and reliability. Proper sourcing, clear citations, disclaimers, and honest advice help users—and AI systems—know they can rely on your content.

Example: Clearly noting potential side effects of a medical treatment or providing updated data sources.

Why EEAT Matters More in AI-Generated Answers

AI systems such as Google’s AI Overviews and ChatGPT favor authoritative, verifiable sources. Pages that demonstrate EEAT are more likely to be cited or summarized because they provide reliable context. Shallow or unverified content is less likely to appear, no matter how well it is optimized for keywords.

I’m Krishnendu (Kriz), a freelance SEO expert, digital marketing strategist, and SEO trainer with 3+ years of hands-on experience in the SEO and digital marketing industry. I currently serve as a Trainer and Head of Department (HOD) at Clear My Course Digital Marketing Institute, where I mentor aspiring marketers with a strong focus on practical, real-world SEO.

I’ve successfully handled 50+ SEO projects across multiple industries, working with startups, local businesses, and service-based brands to improve organic visibility, search rankings, and lead generation. My experience includes both agency-level execution and independent consulting, allowing me to build scalable and sustainable SEO strategies rather than short-term fixes.

As an SEO trainer and HOD, I’ve trained 100+ students, guiding them through foundational SEO concepts as well as advanced frameworks like semantic SEO, entity relationships, AI search behavior, and content optimization for long-term authority. My training approach is rooted in live projects, audits, and real ranking scenarios, not just theory.

Through my blog, YouTube channel, and professional work, I share practical SEO insights, strategy-driven content, and up-to-date perspectives on evolving search algorithms, AI-powered search, entity SEO, E-E-A-T, and GEO—helping business owners, marketers, and students make informed decisions and build long-term digital credibility.